Anika Meier. In your music videos, it’s not about visualization but about coupling, as in Das Atom spaltet die Jugend. The image follows the music, but not in an illustrative way.

Marion Klingemann. I think most people would see me as a fairly rational person who mainly uses the left side of his brain. And that’s not entirely wrong, but when it comes to music, especially danceable music, I can’t help but move to it. If I look at that analytically, those movements are a projection of the complex patterns I perceive in a song onto the range of possibilities that my skeleton and muscles allow. The body is also a kind of multidimensional system, and gestures and sequences of movement are their own language.

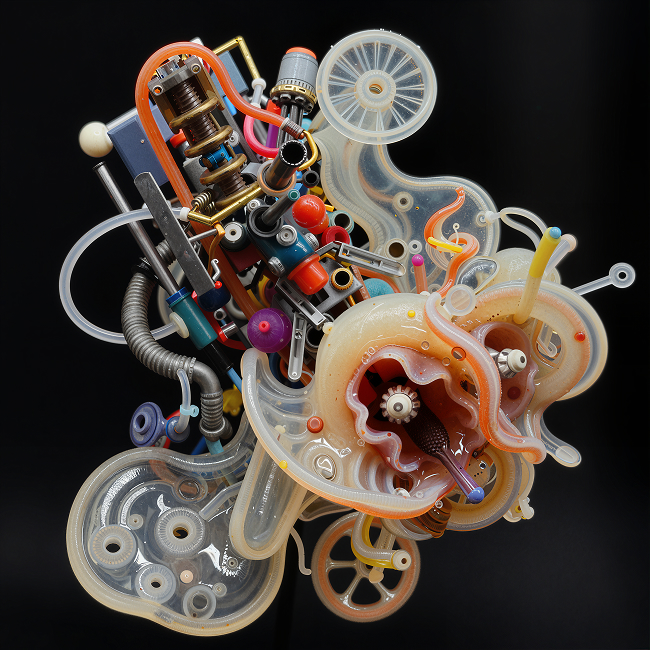

Generative models, especially GANs like the ones I used in 2020 for Das Atom, are systems that transform a multidimensional input, simply put a series of a few hundred numbers, into an image. And just as you can’t bend a body arbitrarily, GANs also follow certain rules, in this case mathematical rather than physical ones. Just as I can move my hand from one point in space to another, I can guide GANs from one position in latent space to another and get a continuous movement and a tangible sense of dynamics, which, however, do not have to obey the laws of the physical world. For me, that is exactly the element of surprise that I am always hoping to find in my practice.

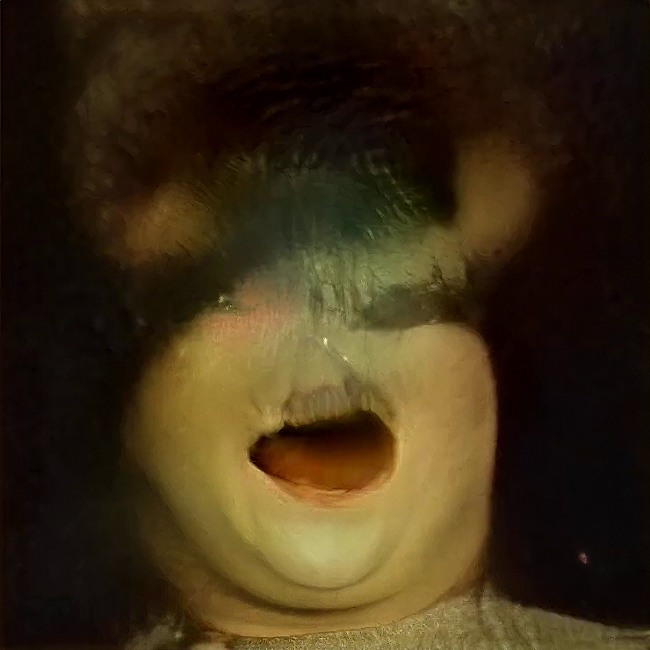

Mario Klingemann, Das Atom., frame_004775_bloom_6x.

Anika Meier. How did you technically connect the music to the model?

Mario Klingemann. The model for Das Atom was StyleGAN2, developed by NVIDIA, trained on faces, and freely available. Since in 2020 there was no model that allowed for generating, deforming, or moving full human bodies at the same quality as faces, I tried to translate music into head movements, facial expressions, or different identities and was simply curious to discover what would be possible.

For the video, I programmed a system that first analyzes the music and extracts information: the energy in different frequency ranges, rhythm, and melody are translated into numbers, which I then made accessible and isolated across multiple channels, allowing me to connect them to different “inputs” in my GAN. These inputs also had to be created first.

In the case of StyleGAN faces, you could compare the whole setup to a marionette with hundreds of strings attached. If I pull one, the eyes move to the left, the mouth opens, and the hair grows. Another string makes the person ten years older and turns the head slightly upward to the left. The first problem to solve was figuring out which strings to pull and by how much, or which to loosen, so that in the end only the head moves or only the mouth opens. Once I had identified several dozen of these latent directional vectors, the next step was to connect the musical information to these “strings” and let my facial marionette dance and age.